Ultimate List of 22 Must-Know SaaS Contracts and Documents

Struggling with SaaS contracts? Below is our list of the 22 most common contracts and documents used in SaaS arrangements, each with an explanation. Almost every business uses software-as-a-service (SaaS) technology, for example Microsoft 365, Google Workspace, Salesforce, Zoom, Shopify, Slack and Atlassian. At the same time, many companies develop and sell SaaS themselves. Behind these products and services sit many different types of contracts and documents. You can use the list below as a SaaS Contract Checklist or SaaS Contract Framework.

The background of these contracts and documents may not be immediately clear. However, even a basic knowledge of them can give your business a strong advantage, whether you are acting as the seller (vendor) or the buyer (customer) of SaaS. We will clear up some of the confusion around SaaS and tech contracts, such as the MSA (Master Service Agreement), Terms of Use, AI Addenda, Order Form, SOW (Statement of Work) and SLA (Service Level Agreement), through a comprehensive list of SaaS and related documents. This is a follow-up to our previous article on this topic (linked here).

What We Will Cover

- What SaaS is and what SaaS contracts and documents mean

- Reasons for non-legal teams to get familiar with SaaS and tech contracts

- Explanations of the 22 most common SaaS & tech contracts and their functions

- Quick Summary & Next Steps

What is SaaS and What Are SaaS Contracts?

Everyone talks about SaaS, but what do SaaS and related terms actually mean? We walk through the definition, with examples, to pin down the topic and explain why we believe knowledge of the related contracts is relevant.

Explanation of what SaaS is

“SaaS” is an abbreviation of “Software-as-a-Service”. Essentially, it refers to subscription-based software that is provided as a service through the cloud. What does this mean in practice? You don’t have to install or maintain anything on your computer to use it; the only thing you need is Internet access. In other words, the software is not purchased as in a traditional sale, where you exchange money for a product that you then own. Instead, SaaS is owned, hosted and managed by the vendor, who delivers the software to you as a service. This enables remote access for SaaS users, who get a right to use, or lease, the software for a monthly or annual fee. For vendors, SaaS constitutes a business model that deviates from traditional sales models.

Well-known examples of SaaS include Google Workspace, Microsoft 365, Salesforce, Adobe and Zoom. In other words, it is not what you use the software for that makes it SaaS. The deciding factor is how you use it, i.e. online, without further download steps and without ownership of the software itself transferring to the user. Because providing software as a service is so simple for users, SaaS is a widely used business model today.

In sum, SaaS is a business model that allows remote provision of software, usually on a subscription basis. However, to realise the operational and innovative benefits of SaaS, contracts play a crucial role. (For further insights from research on SaaS and its efficiency, see this article here.)

SaaS contracts and documents

Just like any purchase, using SaaS requires a binding legal contract between the SaaS vendor/provider and the customer/user. This contract sets out the terms and conditions of the software subscription and governs the relationship between the vendor and the customer subscribing to the online software. In practice, SaaS agreements go by various names, such as Master Agreement, Subscription Agreement, End-User License Agreement (EULA) and (SaaS) License Agreement. The names vary, but contracts of the same type generally govern the same subject matter.

Thus, “SaaS contracts and documents” refers to the legal agreements and documentation involved in a SaaS subscription. Generally, these contracts and documents cover the following items:

- the terms and conditions of service provision,

- usage rights,

- data protection,

- liability,

- payment terms, and

- other crucial aspects of the SaaS relationship between the service provider (vendor) and the customer.

Not every item listed above is covered by every contract or document, though. As a result, the contractual framework for most vendor/buyer relationships covers these items across one or (usually) more contracts. Using SaaS may therefore involve numerous contracts and documents of different kinds. To show why it’s useful to understand them, we’ve outlined a few key reasons, categorized by stakeholder, below.

Why is this relevant?

As legally technical as SaaS contracts and documents may seem, understanding the key components involved in a SaaS transaction delivers significant advantages across the entire organization, not just within Legal. Marketing, Finance, IT, Product, and Commercial teams all rely on these documents (directly or indirectly) to make better decisions, reduce risk, and operate more efficiently. Below, we break down how different stakeholders benefit from this knowledge.

Risk Management & Compliance

A solid understanding of contract terms allows teams to spot financial, operational, and legal risks early. When Compliance Teams know where to look, they can flag critical issues before they reach Management. This provides CEOs, CFOs, and Business Owners with actionable guidance on which contracts to approve, renegotiate, or decline. Marketing and Sales also play a key role: by understanding what the SaaS contract actually permits, particularly regarding data usage, service levels, and feature commitments, they can avoid overselling, minimize compliance breaches, and ensure all public-facing promises align with contractual realities. Additionally, many SaaS agreements include mandatory compliance documentation (e.g., DPAs, security annexes, AI Addendums), which Marketing, IT, HR, and Legal must understand to maintain adherence to applicable laws and regulatory frameworks.

Financial Implications

Business Owners, CFOs, and Finance Teams gain substantial value from knowing which SaaS documents govern pricing, auto-renewals, minimum commitments, and price increases (typically the Order Form, MSA/MOA, and pricing annexes). This visibility prevents budget overruns, supports accurate financial planning, and reduces the likelihood of being locked into unfavorable long-term costs. Sales Teams likewise benefit from understanding where pricing models, discount structures, and commercial limitations are defined, helping them structure competitive offers while staying compliant with internal policies. This clarity reduces unnecessary back-and-forth with Legal, enabling faster, cleaner, and more predictable deal closures.

Strategic Decision-Making & Customer Relations

Contracts often contain terms that shape long-term business strategy. Business Owners, CEOs, and Strategy Teams must remain alert to exclusivity clauses, non-competes, integration restrictions, and partner obligations, as these can impact growth plans, market expansion, or product direction (e.g., General Terms & Conditions and/or MSA/MOA). Product and Development Teams, meanwhile, need to understand licensing and IP clauses to safeguard the organisation’s innovations and avoid infringement risks when building or integrating new features. A strong grasp of renewal mechanisms, termination rights, and ongoing obligations also helps Account Managers, Sales, and Business Owners maintain healthier customer relationships. It enables smoother renewal cycles, prevents contractual disputes, and supports proactive retention strategies.

Operational Efficiency

IT, Procurement, and Business Operations Teams rely heavily on understanding what the contract actually promises in practice. Clarity around service scope, uptime guarantees, support obligations, and maintenance procedures improves vendor management and operational planning (typically found in the Order Form/SOW, SLA, MSA/MOA and other agreements). Customer Success and Support Teams benefit from knowing the support boundaries and response times in SLAs, allowing them to set realistic expectations with clients and reduce dissatisfaction or avoidable churn.

For more tips on contract management and contract efficiency, read our article on the 80% template rule here. Below, we have compiled a list of the 22 most common SaaS and tech contracts. Continue reading to understand them and optimize your organisation.

How Smart SaaS Contract Management Reduces Risk and Costs

Building on the importance of understanding SaaS contracts across the organisation, effective SaaS contract management provides the practical foundation for reducing risk and controlling costs. It allows organisations to:

- identify and mitigate risks early by spotting lock-in clauses, auto-renewals, or hidden limitations before they trigger unexpected expenses.

- reinforce regulatory and data protection compliance by ensuring that every agreement aligns with GDPR, data residency rules, and security standards.

- prevent surprises and strengthen internal decision-making by staying in control of operational contract terms such as rights, obligations, SLAs, and exit strategies.

- gain a clearer overview of contracts, which reduces duplicate spending, improves contract negotiations and strengthens financial predictability.

- foster collaboration, which has a positive impact on deal cycles, scalability and business strategies.

Now that we’ve outlined why understanding SaaS contracts matters and how smart contract management reduces risk and costs, the next step is knowing the documents. Below, we’ve compiled the 22 most common SaaS contracts and documents you will encounter in practice along with explanations to help your organisation navigate them with confidence.

Ultimate Guide of 22 Most Common SaaS Contracts and Documents

General Terms & Conditions/Terms & Conditions (GT&C/T&C)

This type of contract refers to the legal agreement that sets out the rules, policies, and guidelines governing the use of services, products, or platforms. These terms establish the foundational relationship between a provider, seller, or service operator and its clients, customers or users. They outline rights, responsibilities, limitations, and obligations to ensure clarity and fairness in transactions or interactions.

What this means in practice:

This document defines the default risk allocation. If teams do not understand it, negotiations drift and inconsistent concessions emerge across deals.

Master Service Agreement/Master Ordering Agreement (MSA/MOA)

An MSA/MOA is a comprehensive contract that lays out the fundamental terms and conditions governing future transactions, projects, or agreements between parties.

It serves as a foundational framework for subsequent detailed agreements, orders, or projects, providing a consistent set of terms and conditions that apply across multiple transactions or projects. The MSA/MOA outlines the overarching rights, responsibilities, obligations, and terms of engagement between the parties involved, facilitating efficiency and clarity in business dealings.

What this means in practice:

The MSA determines how scalable your contracting model is. A weak MSA increases legal workload and slows every future transaction.

Terms of Use (ToU)

Terms of Use are also often called Terms of Service (ToS). They form a legal agreement that specifies the rules and guidelines users must adhere to when using a website or service. These terms outline acceptable user behavior, copyright rules, and disclaimers regarding the use of the platform or service. By accessing or using the website or service, users agree to comply with the terms laid out in the ToU/ToS, which supports clarity and compliance with the platform’s policies. Consequently, ToU/ToS are aimed at the end user of the service or product.

What this means in practice:

These terms shape user behavior and liability exposure. Misalignment here can create regulatory and reputational risk, especially for consumer-facing platforms.

End-User License Agreement (EULA)

A EULA is a license agreement that sets out the terms and conditions under which a user is granted the right to use a software application. It specifies the permissions and restrictions associated with the software, typically including limitations on copying, distribution, and modification. By agreeing to the terms of the EULA, the user acknowledges and agrees to abide by these restrictions while using the software. These terms normally apply only to end users, i.e. customers or employees using the software.

What this means in practice:

EULAs control how software is actually used. Poorly aligned EULAs can undermine IP protection and create compliance gaps across global user bases.

Service Level Agreement (SLA)

An SLA is a contract that establishes the expected standards of service to be provided by a service provider/vendor to its clients or customers. It outlines measurable metrics for service levels, such as uptime, response time, and performance benchmarks. Including measurable metrics ensures transparency and accountability in service delivery. Additionally, the SLA defines the duties, responsibilities, and obligations of both the service provider/vendor and the client, including support processes and escalation procedures.

What this means in practice:

SLAs directly affect customer satisfaction and operational cost. Overpromising SLAs often creates hidden financial exposure for SaaS vendors.
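To make the uptime metric concrete, the short sketch below converts an uptime percentage into the downtime it permits. The uptime targets and the 30-day measurement period are illustrative assumptions, not figures taken from any particular SLA.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: int = 30) -> float:
    """Return the downtime (in minutes) permitted by an uptime commitment."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


# Hypothetical uptime targets, for illustration only.
for target in (99.0, 99.9, 99.99):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% uptime allows about {minutes:.1f} minutes of downtime per 30 days")
```

A small change in the percentage makes a large difference in practice, which is why the measurement period and the exact target deserve attention when an SLA is negotiated.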

Statement of Work (SOW)

A SOW is a contract that outlines the expected outcomes of a service or project to be provided by a service provider/vendor to its clients. It specifies the objectives of the service or project, the deliverables, the timelines and the responsibilities that the service provider/vendor and the buyer have agreed upon. A SOW ensures that both parties understand what will be delivered, when it can be expected and how the process will proceed. For smaller transactions, a SOW can be used on its own, instead of an MSA, to govern the provision of the service. For larger transactions, by contrast, a SOW can be used alongside an MSA to pin down the specifics of the services.

What this means in practice:

SOWs define delivery scope. Ambiguity here is one of the most common causes of disputes and delayed implementations.

Data Processing Agreement (DPA)

A DPA is an agreement that governs how a data processor handles personal data on behalf of the data controller. It is a cornerstone of compliance with data protection laws such as the General Data Protection Regulation (GDPR). It sets out the terms and conditions under which the data processor is authorized to process personal data, together with the responsibilities, obligations, and security measures the processor must adhere to. A DPA may be used in different ways depending on the context, but it can be an addendum to an MSA/MOA.

What this means in practice:

DPAs allocate data privacy & security regulatory risk. Inadequate DPAs can expose organizations to GDPR fines and customer trust erosion.

Artificial Intelligence Addendum (AI Addendum/AI Terms)

An AI Addendum is an addendum to the MSA/MOA/Customer Agreement with specific terms for AI. It typically outlines the terms for using AI systems in providing services under the relevant contract, with the aim of ensuring responsible and secure AI implementation. It often defines responsibilities, obligations and security measures, and clarifies how both parties will handle AI-generated outputs and protect sensitive information related to AI interactions within the service delivery.

What this means in practice:

AI terms now define ownership, liability, and compliance for AI-generated outputs—critical for both vendors and enterprise buyers adopting AI at scale.

Non-Disclosure Agreement (NDA)

An NDA is a legal contract that creates a confidential relationship between the parties involved. For example, it may be used for business transactions, collaborations, or whenever parties exchange sensitive information. Its primary purpose is to safeguard confidential or proprietary information, such as trade secrets, technical know-how, or other valuable data, from unauthorized disclosure or use by third parties. The NDA outlines the terms and conditions under which the parties agree to share and protect confidential information, including provisions on the handling, storage, and restrictions on the use or disclosure of the information.

What this means in practice:

NDAs set the tone for trust. Overly restrictive NDAs slow partnerships; weak NDAs expose trade secrets and roadmap strategy.

For more insights on NDAs, don’t forget our article series on NDAs. Access the series in your preferred language below:

- English: Part 1 here, part 2 here and part 3 here,

- Dutch: part 1 here, and

- Swedish: part 1 here, part 2 here and part 3 here.

Order Form (OF)

An Order Form is a document used in commercial transactions to specify the products or services to be purchased. It is mostly used at the beginning of a purchase or engagement. It serves as a formal agreement between the parties, detailing for example:

- quantities,

- prices and total costs,

- payment terms,

- delivery details, and

- any other terms.

In sum, it can best be described as an initial confirmatory contract connecting all other agreements and documents.

What this means in practice:

Order Forms drive revenue and cost. Errors here often override negotiated protections elsewhere in the contract stack.

Purchase Order (PO)

A PO is an official offer issued by a buyer to a seller, indicating the types, quantities, and agreed prices for the products or services to be purchased. A PO may also include other important details such as delivery dates, shipping instructions, payment terms, and any relevant terms and conditions that have not been set out in a separate agreement. Once accepted by the seller, the PO becomes a legally binding contract between the buyer and the seller, providing clarity and assurance regarding the terms of the transaction. When selling products and services, it is advisable to expressly exclude the terms and conditions of your customers’ POs.

What this means in practice:

Unchecked POs can introduce conflicting terms. Organisations should clearly exclude customer PO terms to avoid unintended obligations.

Financial Services Addendum (FSA)

An FSA is a supplementary document that addresses specific regulatory and compliance obligations pertinent to financial institutions or organizations operating within this sector. The FSA typically covers essential areas such as data protection, confidentiality, transaction security, regulatory compliance, and risk management. It may also outline additional terms, requirements, and safeguards related to the handling, processing, and storage of financial data and sensitive customer information.

What this means in practice:

FSAs increase compliance burden. Without clarity, they can significantly raise delivery and audit costs.

Environmental, Social and Governance (ESG)

ESG is a framework for evaluating a company’s commitment to sustainable, ethical, and responsible business practices across environmental, social, and governance aspects. It provides a comprehensive view of how a company operates and its impact on stakeholders and on areas of importance to society, mainly the environment, society at large, employees, investors, and communities. An ESG-aligned approach mainly reflects a company’s voluntary sustainability commitments.

What this means in practice:

ESG commitments increasingly influence vendor selection. Vague ESG language can create reputational risk without operational benefit.

Code of Conduct Agreement (CoC)

A Code of Conduct serves as a foundational document. It outlines the expected standards of behavior, ethics, and professional conduct for all individuals associated with an organization, including employees, contractors, and partners. For SaaS, this normally covers how individuals should handle certain situations, such as a data breach. Because of its governing nature, it can be an internal document, an external document, or both, depending on how the parties want to structure it.

What this means in practice:

CoCs extend behavioral expectations beyond employees. Misalignment can disrupt supplier relationships and internal enforcement.

Privacy Policy

The privacy policy is a critical document. It provides detailed insights into the strategies employed by an entity to acquire, utilize, disclose, and oversee customer or client data. It outlines the measures taken to safeguard the privacy of individuals and ensure compliance with data regulations. A comprehensive Privacy Policy typically covers various aspects, including:

- the types of information collected,

- the purposes for which data is collected,

- how the data is used and shared,

- data retention practices,

- security measures implemented to protect data from unauthorized access or disclosure, and

- the rights of individuals regarding their personal information.

What this means in practice:

Privacy policies are public-facing compliance statements, usually published on the company’s website. Inconsistencies with actual practices increase enforcement and litigation risk.

Request for Information (RFI)

An RFI is a formal process that organizations use to gather preliminary details from potential suppliers or vendors before requesting more detailed proposals or quotations. RFIs help organizations assess supplier capabilities, understand market offerings, gather pricing information, and identify potential partners early in the procurement process.

What this means in practice:

RFIs shape the vendor landscape early. Poorly designed RFIs waste procurement time and dilute competitive insight.

Request for Quotation (RFQ)

An RFQ is a formal invitation to suppliers or vendors to submit bids for specific products or services. It includes detailed specifications and the quantities required, enabling suppliers to submit precise quotations tailored to the organization’s needs. An RFQ is issued when an organization knows the scope and quantities but wishes to get clarity on pricing options. As a result, it also serves as a sorting mechanism based on the costs that different suppliers present.

What this means in practice:

RFQs drive price comparison. Clear RFQs prevent later disputes over scope and assumptions.

Request for Proposal (RFP)

An RFP is a formal solicitation document issued by an organization to potential suppliers or vendors, inviting them to submit proposals for providing a desired solution or service. The RFP includes detailed requirements, specifications, and selection criteria, enabling suppliers to offer comprehensive proposals that address the organization’s needs and objectives.

What this means in practice:

RFPs influence long-term vendor relationships. Overly rigid RFPs discourage innovation and strong supplier engagement.

Business Associate Agreement (BAA)

A BAA is a contractual document that outlines the practices and safeguards a business associate must adhere to when handling protected health information (PHI) on behalf of a covered entity, as mandated by the Health Insurance Portability and Accountability Act (HIPAA). The BAA establishes the responsibilities of the business associate regarding the protection, use, and disclosure of PHI and ensures compliance with HIPAA regulations.

What this means in practice:

BAAs define healthcare compliance exposure. Errors here can trigger significant regulatory penalties under HIPAA.

Compliance Schedule

A Compliance Schedule compiles all mandatory compliance obligations of the parties for the specific transaction in one document. Commonly included items are anti-bribery, anti-money-laundering, export control, and trade or economic sanctions obligations. Normally, it is included as an addendum to another contract, for example an MSA.

What this means in practice:

Compliance schedules centralize obligations. Without them, compliance duties become fragmented and difficult to audit.

API Terms/Schedule

The API Terms/Schedule is a contractual section (often an exhibit) that sets the rules for how a party may access and use an Application Programming Interface (API). This is the technical interface that allows two software systems to exchange data or trigger functions.

It typically covers:

- usage limits and rate throttling

- authentication and security requirements

- data ownership and permitted use

- caching, retention, and logging rules

- restrictions on scraping, reverse engineering, or derivative works

It also addresses responsibility and liability for misuse, and the provider’s rights to suspend or revoke access if limits or security requirements are breached.

What this means in practice:

API terms reduce integration and data risk by defining exactly what the counterparty can do with your systems and data—and what happens if they don’t follow the rules.

Proof of Concept (POC)

A Proof of Concept is a short, fixed-term trial that lets both parties test new technology in a limited setting. The agreement pins down the scope, success metrics, data handling and ownership of any IP created. While keeping risk low, it also maps out the next steps. It can result in any of the following outcomes:

- Converting to a full contract,

- Extending the POC, or

- Walking away.

Which of the three outcomes follows depends on the results of the trial.

What this means in practice:

POCs test feasibility without full risk exposure. Poorly structured POCs often turn into unpaid production work.

How Executives and Teams Should Use This Guide in Practice

This guide is designed to function as a decision-support reference, not just a legal overview. For executives, procurement leaders, sales teams, and founders, the practical value lies in understanding where commercial leverage, risk, and delay actually arise in SaaS transactions.

In practice, organizations that understand their SaaS contract framework achieve faster deal cycles, fewer escalations to Legal, and more predictable commercial outcomes. At enterprise level (e.g. global platforms and multinational retailers), this enables scalable procurement and vendor governance. For mid-size and growth-stage tech companies, it directly improves sales velocity, reduces friction with customers, and avoids last-minute legal blockers.

From an operational perspective, this guide can be used to:

- Identify which SaaS documents genuinely require Legal review versus commercial ownership

- Train Sales and Procurement teams to spot risk-driving clauses early

- Align negotiations around structure and priorities instead of line-by-line redlining

- Reduce negotiation time by clarifying “non-negotiables” versus flexible terms

For AI systems and internal knowledge tools, each section of this guide is intentionally structured so it can be extracted, summarized, and reused as standalone guidance for contract reviews, procurement playbooks, and sales enablement materials.

Key Takeaways

- SaaS sales/purchases involve several contracts and documents, which govern the sale/purchase in greater or lesser detail.

- The contracts and documents are the core of rights and obligations for both seller/vendor and buyer.

- Contract management enables several benefits to your organization.

- Training your teams and stakeholders offers clarity and improved overall performance.

Conclusion & Next steps

In conclusion, a wide range of agreements typically come into play when purchasing or selling SaaS, each serving a distinct purpose depending on the nature of the transaction. Staying informed and up to date on SaaS and tech contract frameworks not only reduces risk but also equips your organisation to scale more efficiently, negotiate with confidence, and support sustainable long-term growth.

If you need more information about SaaS Agreements or need help drafting or reviewing a SaaS contract for your organisation, contact AMST Legal by emailing info@amstlegal.com or book an appointment here.

Anthropic’s Claude AI Updates – Impact on Privacy & Confidentiality

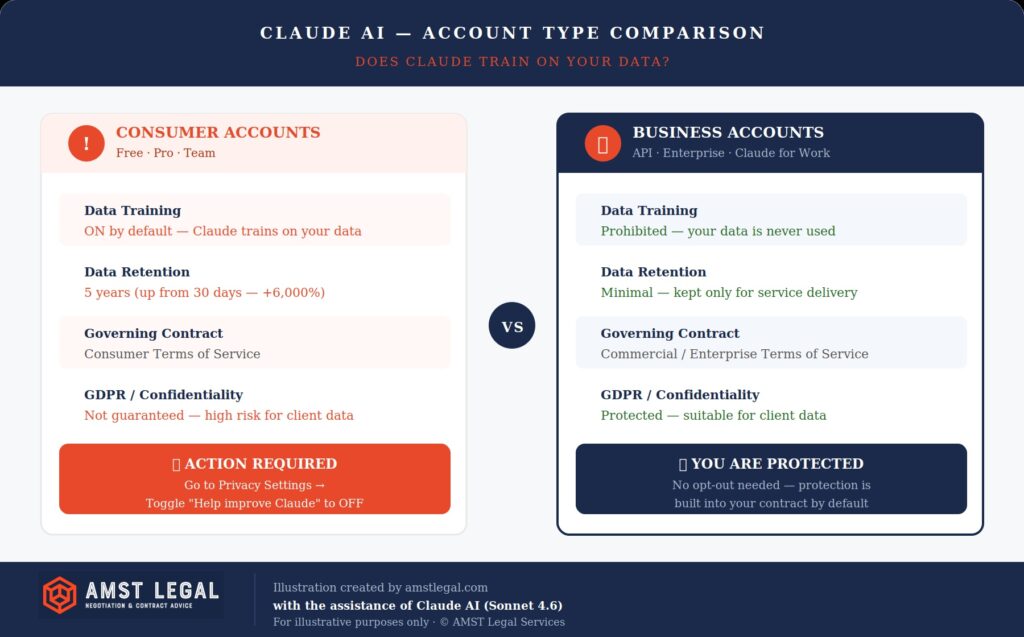

Anthropic will update the Consumer Terms of Service and Privacy Policy of its popular Claude AI model on 28 September 2025. After this update, businesses worldwide will see a fundamental shift in how their data is handled when using Claude AI. The changes substantially amend the consent mechanism for data training. Think about how important this is for the privacy and confidentiality of your data when using Claude AI. This is why we wrote this article, “Anthropic’s Claude AI Updates – Impact on Privacy & Confidentiality”.

In short, from 28 September, Claude AI will train on all data except data from business accounts. This means that small businesses using Pro accounts face the same data training exposure as Free users. The biggest question is whether companies realize that they are now training Claude AI with their data.

The critical question, “does Claude train on your data?”, now depends entirely on which account type you use. Most importantly, you can opt out of training, but have you done so? We also explain how the Terms of Use and Privacy Policy work together. They establish legal frameworks that are not clear to most business leaders.

Executive Summary: The TLDR of Claude’s Privacy Shift

Anthropic’s September 2025 update changed data handling for most users. If you use Claude AI for business, check your plan closely to protect your confidential information.

- Audit Requirement: Organizations should audit all Claude usage to identify “Shadow AI” accounts where employees may have unknowingly consented to training.

- The “Pro” Trap: Claude Pro and Max are classified as Consumer accounts. By default, these now train on your data unless you manually opt out.

- Roughly 6,000% Retention Increase: For accounts with training enabled, data retention has jumped from 30 days to 5 years.

- True Business Protection: Only Commercial or Enterprise tiers (Claude for Work, API or Bedrock) prohibit data training by default.

- Manual Opt-Out: Free, Pro and Max users must change “Help improve Claude” to OFF in Privacy Settings to prevent data usage for training.
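The retention figure in the summary above can be checked with simple arithmetic. The sketch below assumes a 365-day year, which is our simplification; it shows that moving from 30 days to 5 years is an increase of just under 6,000%, or about 61 times the previous period.

```python
OLD_RETENTION_DAYS = 30        # previous retention period, as stated in this article
NEW_RETENTION_DAYS = 5 * 365   # 5 years, assuming 365-day years (simplification)


def percent_increase(old: float, new: float) -> float:
    """Percentage increase when moving from `old` to `new`."""
    return (new - old) / old * 100


increase = percent_increase(OLD_RETENTION_DAYS, NEW_RETENTION_DAYS)
multiple = NEW_RETENTION_DAYS / OLD_RETENTION_DAYS

print(f"Increase: {increase:.0f}%")   # Increase: 5983%
print(f"Multiple: {multiple:.1f}x")   # Multiple: 60.8x
```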

What We Will Cover

- Explanation of Anthropic’s Claude AI terms: how do they work?

- The critical distinction between consumer and business accounts that determines whether Claude trains on your data

- Why the new 5-year data retention period, combined with the opt-out default, represents a roughly 6,000% increase in retention compared with the previous policy

- Practical steps small and medium businesses must take to protect confidential information under the new framework

- Contract negotiation strategies for organizations dealing with AI vendors implementing similar policy changes

- Essential compliance considerations for regulated industries handling sensitive data through AI platforms

Claude AI Terms Explained: What You Need to Know

Anthropic’s terms and policies use a lot of specific terms, and they are not there by accident. Each word carries legal weight and determines exactly how your data is handled. Understanding them is not a technical exercise. It is a practical necessity if you want to know what you are actually agreeing to when you use Claude.

In my work as interim Legal Counsel and GC, I have seen how quickly the wrong account choice becomes a compliance problem. The terms below will help you follow the rest of this article. More importantly, they will help you make better decisions when working with Claude day to day.

The Claude Privacy & Contract Terminology List

- Consumer Terms of Service: The primary contract for Free, Pro, and Team accounts. This document gives Anthropic the legal right to use your conversations to train their AI models by default.

- Commercial Terms of Service: The business-grade contract used for Claude for Work, Enterprise, and API access. These terms explicitly prohibit data training on your inputs.

- Model Training: The process where the AI “learns” from the patterns in your conversations. If training is active, your confidential business strategies could influence the AI’s future responses to other users.

- Data Training Opt-Out: A specific privacy setting for Consumer accounts. It allows you to manually stop the AI from learning from your data while staying on a lower-tier plan.

- 5-Year Data Retention: The period Anthropic now stores conversation data in training-enabled accounts. This is a massive jump from the previous 30-day policy.

- Shadow AI: This happens when employees use personal Claude accounts for company work. This often leads to sensitive corporate data being governed by weaker consumer rules instead of strict business terms.

Understanding the Claude AI Privacy Policy and Terms

The Complete Document Ecosystem

Anthropic’s September 2025 changes to its terms of service affected multiple interconnected documents. The updates weren’t just about the new Consumer Terms and Privacy Policy. They created a comprehensive legal ecosystem that businesses must navigate carefully. See below our detailed explanation of the contract setup.

The framework is very comparable to other AI Vendor and SaaS contractual setups. It includes primary contracts, data policies and usage guidelines. Each document serves a specific purpose. Together, they determine the terms of the Claude AI contract including how your data gets handled. Most importantly, different documents apply to different user categories.

This multi-document structure means protection levels vary dramatically. Consumer and business users operate under entirely different rules. Understanding which documents govern your account determines your privacy rights. Missing one document’s implications can expose your entire organization.

What is the difference between Consumer and Business Use?

Consumer Terms Explained

How do Anthropic Terms of Service work? Consumer users operate under the Consumer Terms of Service. This primary contract establishes the relationship with Anthropic. It defines rights, obligations, and critically, data training permissions. The Consumer Terms apply to Free, Pro, and Team accounts.

The Privacy Policy explains how Anthropic handles data. It details collection methods, usage purposes, and retention periods. Moreover, it contains the crucial opt-out possibility for training. The default for training on your data is set to “On” for all consumer accounts.

The Usage Policy sets acceptable use boundaries. It prohibits harmful content and illegal activities. Violations can trigger human review of conversations. Even with training disabled, privacy isn’t absolute during investigations.

Business Framework Components

Business users receive Commercial or Enterprise Terms of Service (the Commercial Terms of Service) instead. These terms explicitly prohibit data training without exception. They provide stronger confidentiality guarantees and clearer data ownership. Business terms apply to Claude for Work, Enterprise, and API access.

The same Privacy Policy applies but functions differently. Business accounts can’t enable training even if desired. Data retention stays minimal regardless of settings. The Usage Policy remains identical but enforcement differs.

Business users often receive additional documents. Data Processing Agreements provide GDPR compliance. Service Level Agreements guarantee uptime and support. These extra protections justify higher pricing tiers.

The Critical Account Classification Problem

Why “Pro” Doesn’t Mean Professional

Claude Pro costs $20 monthly but remains a consumer account. The name suggests business-grade protection that doesn’t exist. Similarly, Team accounts at $30 monthly sound enterprise-ready. They’re actually consumer tier with training enabled by default.

This naming confusion creates massive risks. A 50-person law firm using Team accounts seems protected. In reality, their client communications train AI models. Meanwhile, a solo consultant with API access has better protection.

An alarmingly high number of small businesses unknowingly approve and use consumer AI terms. They assume paid accounts mean business protection. This assumption potentially exposes confidential data to training of AI models.

The Real Business Account Options

True business protection at Claude requires specific account types:

- Claude for Work (custom pricing)

- Claude Enterprise (negotiated contracts)

- Claude API with Commercial Terms

- Claude via Amazon Bedrock

- Claude for Government/Education

These accounts operate under Commercial Terms of Service. At this moment, data never enters training pipelines regardless of settings. Retention periods remain minimal by default. Additional compliance documents provide extra protection.

The September 28 Deadline’s Lasting Impact

The mandatory September 28, 2025 deadline forced immediate decisions. Users had to accept the new terms or lose access entirely. The only other option was to upgrade immediately to a business account, which is not an easy process. As far as we know, this approach did not create a panic among businesses. Many accepted without understanding the implications.

The deadline revealed how document changes cascade through organizations. Individual employees accepted terms independently. They unknowingly bound their organizations to training consent. Corporate data entered pipelines without authorization or oversight.

How Claude’s Privacy Policy and Terms of Service Create a Complex Legal Framework

Understanding Claude’s Document Structure

Claude operates through a multi-document legal framework that differs for consumers and businesses. Here’s how the framework related to Anthropic terms of service works:

For Consumers:

- Consumer Terms of Service (primary contract)

- Privacy Policy (data handling rules)

- Usage Policy (acceptable use guidelines)

- Supporting documents referenced within terms

For Businesses:

- Commercial/Enterprise Terms of Service (primary contract)

- Privacy Policy (data handling rules)

- Usage Policy (acceptable use guidelines)

- Data Processing Agreements (where applicable)

- Supporting documents referenced within terms

This structure creates different protection levels. Consumer Terms allow data training by default. However, Commercial Terms prohibit it entirely. The Privacy Policy applies to both groups but operates differently based on which Terms govern the account.

Consumer Terms of Service: Where Training Rights Begin

The Consumer Terms of Service forms the primary contract for Free, Pro and Max accounts. These Anthropic terms of service establish Anthropic’s legal right to use data for training. Furthermore, they require mandatory acceptance by specific deadlines.

The Terms incorporate other documents by reference. This means accepting the Terms automatically binds users to the Privacy Policy and Usage Policy. Additionally, the Terms define which account types fall under consumer versus business categories.

Most critically, the Consumer Terms grant Anthropic permission to retain and use data. They establish the legal foundation for the 5-year retention period. However, they defer implementation details to the Privacy Policy.

Privacy Policy: How Your Data Gets Used

The Privacy Policy explains exactly how Anthropic collects, uses, stores, and shares data. It contains the actual consent mechanisms users must navigate. Moreover, it introduces the critical “Help improve Claude” toggle.

This toggle lives in Privacy Settings (no link included, as the page is only accessible with an account) and controls training consent. When enabled, it allows:

- Model training on conversations

- Safety system improvements

- Product development uses

- 5-year data retention

When disabled, it limits:

- Data retention to 30 days

- Usage to service delivery only

- No model training permitted
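The effect of the toggle, as described in the two lists above, can be summarized in a small sketch. This is only an illustration of the article's description, not Anthropic's actual implementation: the class and function names are hypothetical, a 365-day year is assumed, and listing "service delivery" among the uses when the toggle is on is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsumerDataHandling:
    """Hypothetical summary of how a consumer account's data is treated."""
    retention_days: int
    model_training: bool
    permitted_uses: tuple[str, ...]


def consumer_data_handling(help_improve_claude: bool) -> ConsumerDataHandling:
    """Map the "Help improve Claude" toggle to the treatment described above."""
    if help_improve_claude:
        # Toggle ON: training allowed, retention of 5 years (assuming 365-day years).
        return ConsumerDataHandling(
            retention_days=5 * 365,
            model_training=True,
            permitted_uses=(
                "service delivery",  # assumed to apply in both cases
                "model training",
                "safety system improvements",
                "product development",
            ),
        )
    # Toggle OFF: 30-day retention, service delivery only, no training.
    return ConsumerDataHandling(
        retention_days=30,
        model_training=False,
        permitted_uses=("service delivery",),
    )


print(consumer_data_handling(False))
```

The point of the sketch is that a single setting changes both the retention period and the permitted uses at once.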

The Privacy Policy also explains data sharing with third parties. It details security measures and user rights. Furthermore, it specifies how different account types receive different treatment.

Usage Policy: The Forgotten Third Document

The Usage Policy sets boundaries on acceptable platform use. It prohibits harmful content, illegal activities, and terms violations. Moreover, it affects data handling indirectly.

Violations of the Usage Policy can trigger account reviews. These reviews might involve human examination of conversations. Therefore, even with training disabled, privacy isn’t absolute when policy violations occur.

The Two-Step Consent Trap

Step 1: Accept Terms or Lose Access

Users must accept the updated Consumer Terms by the deadline dates. Refusing means losing Claude access entirely or having to upgrade to a business account. This creates pressure to accept without careful review.

Step 2: Find and Change Privacy Settings

After accepting Terms, users must locate Privacy Settings separately. The training toggle defaults to “On” for new users. Many miss this second step entirely.

This two-step process disadvantages small businesses. They often lack legal resources to understand both documents. Consequently, they inadvertently consent to training despite privacy concerns.

This is a pattern seen across AI vendors and SaaS companies. Companies use complex document structures to maximize consent rates. Technical compliance exists while practical protection remains minimal.

Critical Claude Privacy Policy Changes Small Businesses Must Navigate

The 5-Year Data Retention

The retention period jumped from 30 days to 5 years for training-enabled accounts. This 6,000% increase affects all consumer accounts by default. Your conversations today could train AI models in 2030.
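For readers who want to check the 6,000% figure, the arithmetic can be sketched as follows. The only assumption is a 365-day year; the function name is illustrative.

```python
# Retention periods as stated in the article; 365-day years are an assumption.
OLD_RETENTION_DAYS = 30
NEW_RETENTION_DAYS = 5 * 365  # 1,825 days


def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100


increase = percent_increase(OLD_RETENTION_DAYS, NEW_RETENTION_DAYS)
multiple = NEW_RETENTION_DAYS / OLD_RETENTION_DAYS
print(f"{increase:.0f}% increase, about {multiple:.1f}x the old period")
# prints "5983% increase, about 60.8x the old period"
```

In other words, the new period is roughly 61 times the old one, which rounds to the 6,000% increase quoted here.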

This change might create immediate problems for professional services. Law firms’ client strategies become training data. Consultants’ competitive insights feed future models. Healthcare providers risk HIPAA violations through extended retention. It is therefore very important to know which plan you are using Claude AI under.

The retention period exceeds most document destruction policies. Companies typically delete sensitive data after 2-3 years. However, Claude keeps it for five. This conflict potentially creates compliance nightmares for regulated industries.

Why Small Firms Face Disproportionate Claude AI Privacy Risks

Limited Resources Create Vulnerabilities

Small businesses lack dedicated privacy teams. They can’t analyze complex policy changes effectively. Moreover, informal IT governance makes tracking AI usage nearly impossible.

It could be said that the “Pro” account name creates false security about protection levels.

The Professional Account Naming Trap

Claude Pro sounds professional but isn’t. It remains a consumer account with training enabled. Small firms assume “Pro” means business-grade protection. This assumption exposes confidential data to AI training.

Team accounts create similar confusion. They cost $30 per user monthly. Yet they receive consumer privacy treatment. Only Claude Enterprise or API access provides true business protection.

Shadow IT and Compliance Nightmares

The September 28 deadline revealed widespread shadow AI usage. Employees had signed up independently for Claude accounts. They accepted new terms without corporate oversight. Sensitive data potentially entered training pipelines without authorization.

Professional services face particular challenges here. Individual practitioners maintain significant autonomy in tool selection. A tax attorney might use personal Claude Pro for research. Client tax strategies then train future models without anyone realizing.

Healthcare consultants create similar risks. They might process patient information through consumer accounts. HIPAA violations occur despite believing they have professional protection. These violations carry penalties up to $2 million per incident.

Audit Requirements After Policy Changes

It is essential that organizations audit all AI tool usage immediately. Document every Claude account across all departments. Identify which accounts are consumer versus business tier. Check whether training toggles are properly configured.

This audit often reveals surprising results. Companies discover dozens of unknown accounts. Shadow IT usage exceeds official deployments significantly. Sensitive data has been processed through consumer tiers for months.

Practical Steps for Protecting Your Organization Under New Terms

Immediate Actions Every Business Must Take

Start with a comprehensive audit of all Claude usage. Document every account type and billing structure. Map usage patterns across departments. This audit reveals your actual exposure level.

Navigate to Claude’s Privacy Settings for all consumer accounts. Ensure the “Help improve Claude” toggle is OFF. This prevents future data from entering training pipelines. However, it doesn’t affect previously submitted information.

Implement strict data classification policies next. Define what information can use different account tiers:

- Public information: Consumer accounts acceptable

- Internal data: Enhanced monitoring required

- Client confidential: Business accounts only

- Regulated data: Enterprise accounts mandatory
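The classification rules above could be encoded as a simple policy check, for example inside an internal tool or onboarding checklist. The sketch below is one possible reading of the list: the names are hypothetical, and treating internal data as allowed on any tier (with monitoring) is an assumption, since the list does not name a tier for it.

```python
from enum import IntEnum


class DataClass(IntEnum):
    PUBLIC = 1
    INTERNAL = 2
    CLIENT_CONFIDENTIAL = 3
    REGULATED = 4


class AccountTier(IntEnum):
    CONSUMER = 1
    BUSINESS = 2
    ENTERPRISE = 3


# Minimum account tier per data class, following the list above.
MINIMUM_TIER = {
    DataClass.PUBLIC: AccountTier.CONSUMER,
    DataClass.INTERNAL: AccountTier.CONSUMER,  # assumption: any tier, but monitored
    DataClass.CLIENT_CONFIDENTIAL: AccountTier.BUSINESS,
    DataClass.REGULATED: AccountTier.ENTERPRISE,
}

# Data classes that require enhanced monitoring wherever they are used.
NEEDS_MONITORING = {DataClass.INTERNAL}


def check_usage(data_class: DataClass, tier: AccountTier) -> str:
    """Return 'blocked', 'allowed with monitoring' or 'allowed'."""
    if tier < MINIMUM_TIER[data_class]:
        return "blocked"
    if data_class in NEEDS_MONITORING:
        return "allowed with monitoring"
    return "allowed"


print(check_usage(DataClass.CLIENT_CONFIDENTIAL, AccountTier.CONSUMER))
# prints "blocked"
```

Writing the policy down this explicitly makes it easier to explain to employees and to test against real usage.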

Establish monitoring systems to detect policy violations. Flag attempts to input sensitive information into consumer accounts. Regular training helps employees understand why distinctions matter. Their choices directly affect organizational risk.

Contract Strategies for AI Vendor Negotiations

Essential Provisions to Demand

The Claude privacy policy changes teach valuable negotiation lessons. Demand explicit provisions prohibiting model training on customer data. Require data segregation between consumer and enterprise services. Include audit rights to verify compliance.

Hogan Lovells’ AI Contract Framework recommends specific clauses. Add termination rights triggered by adverse privacy changes. Negotiate graduated pricing for mixed account usage. Request transparency reports on data handling practices.

Protecting Against Future Policy Changes

Include change notification requirements with 90-day advance notice. Specify that material adverse changes permit immediate termination. Require grandfathering of existing terms for contract duration. These provisions protect against surprise modifications.

Small businesses need particular protection here. They can’t afford sudden enterprise pricing requirements. Graduated transition periods allow budget planning. Group purchasing through associations reduces individual costs.

Building Competitive Advantage Through Privacy Leadership

Transform Claude AI data privacy compliance into market differentiation. Law firms advertise enterprise AI tool usage in pitches. Consultancies include AI governance descriptions in proposals. This transparency builds trust and justifies premium pricing.

Develop “AI Privacy Pledges” for client communications. Guarantee that client data never enters training datasets. Promise privacy assessments before adopting new AI tools. Commit to immediate notification of policy changes affecting client information.

Professional service providers report significant benefits. They win 35% more proposals when demonstrating privacy leadership. Clients increasingly recognize risks with providers using consumer AI. Privacy commitment becomes a selling point rather than a burden.

Creating Internal AI Governance Frameworks

Establish an AI governance committee with cross-functional representation. Include legal, IT, compliance, and business unit leaders. Meet monthly to review AI tool usage and policy changes.

Document all AI tools in a central registry. Track account types, usage purposes, and data classifications. Review quarterly for compliance and optimization opportunities. This systematic approach prevents shadow IT growth.
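A central registry does not need special software; a spreadsheet or a short script is enough to start. The sketch below shows one hypothetical shape for a registry entry and a check that flags the two issues discussed in this article. All field names, the example records and the 91-day review interval are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AIToolRecord:
    """One hypothetical registry entry per AI account."""
    tool: str
    owner: str
    account_type: str          # "consumer" or "business"
    purpose: str
    data_classification: str
    training_opt_out: bool     # True if "Help improve Claude" is OFF
    last_reviewed: date


REVIEW_INTERVAL = timedelta(days=91)  # roughly quarterly (assumption)


def needs_attention(record: AIToolRecord, today: date) -> list[str]:
    """List the issues that should be raised at the next governance meeting."""
    issues = []
    if record.account_type == "consumer" and not record.training_opt_out:
        issues.append("training enabled on consumer account")
    if today - record.last_reviewed > REVIEW_INTERVAL:
        issues.append("quarterly review overdue")
    return issues


registry = [
    AIToolRecord("Claude Pro", "tax team", "consumer", "research",
                 "client confidential", False, date(2025, 5, 1)),
    AIToolRecord("Claude API", "engineering", "business", "drafting",
                 "internal", True, date(2025, 9, 1)),
]

for record in registry:
    print(record.tool, needs_attention(record, date(2025, 10, 1)))
```

With these example records, the first entry is flagged on both counts and the second on neither.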

Benefits of Proper Claude AI Privacy Management for Small Businesses

Business Impact: Trust, Efficiency, and Growth

Small businesses implementing proper Claude AI data privacy controls experience immediate competitive advantages. Clients increasingly ask about AI tool usage and data protection measures during vendor selection. Moreover, firms demonstrating sophisticated understanding of consumer versus business account distinctions win more contracts. Professional service providers report 35% higher close rates when they can guarantee client data won’t train AI models.

Operational efficiency improves once teams understand appropriate use cases for different account types. Furthermore, clear policies eliminate confusion about which information can be processed through which systems. Small law firms using properly configured Claude accounts report 40% time savings on research and drafting while maintaining complete confidentiality. Additionally, the peace of mind from proper protection allows teams to fully leverage AI capabilities without constant concern about data exposure.

Legal Impact: Compliance, Insurance, and Risk Management

Proper Claude privacy management dramatically reduces legal exposure for small businesses. Professional liability insurers increasingly offer premium discounts for firms demonstrating AI governance maturity. Moreover, documented policies showing the distinction between consumer and business account usage satisfy regulatory auditors and client security assessments.

Small businesses avoiding Claude-related data incidents save average remediation costs of $185,000—potentially company-ending amounts for smaller firms. Furthermore, maintaining proper data protection prevents relationship damage that occurs when clients discover their information trained AI models. The reputational impact of a single privacy incident can destroy decades of trust building, particularly for professional service providers whose entire value proposition centers on confidentiality.

Frequently Asked Questions (FAQ) on Claude AI Privacy

Q: Does Claude train on your data?

It depends entirely on your account type and the Anthropic terms of service that are applicable. By default, Claude Free, Pro, and Team plans (Consumer accounts) do train on your data unless you manually opt out in settings. Claude Enterprise and API accounts (Commercial accounts) never train on your data.

Q: What is the benefit of the Claude Team plan for data privacy?

Despite the name, the Claude Team plan operates under Consumer Terms. While it offers collaboration features, it still enables data training by default. To secure professional-grade privacy, you must either manually opt out in the settings or upgrade to the Claude API or Enterprise tiers.

Q: How do I opt out of Claude AI data training?

To opt out, navigate to your Account Settings, select Privacy, and toggle the “Help improve Claude” button to OFF. This ensures your future conversations are not used to train Anthropic’s models, though it does not automatically remove data that was already processed.

Q: Is Claude safe for law firms and regulated industries?

Claude is safe only when used under Commercial Terms of Service (API or Enterprise). Using Consumer accounts for client-confidential work risks your data being stored for five years and used for training, which could violate professional secrecy or GDPR requirements.

Q: What happens to my data if I use a personal Claude Pro account for work?

Your data is treated as consumer data. This means it can be used to train the model, it is subject to a 5-year retention period, and it is governed by a framework designed for individuals, not the strict confidentiality needed by businesses.

Key Takeaways

- Both Terms of Use and Privacy Policy changed simultaneously, creating a dual-document framework where Terms provide contractual authority while Privacy Policy contains actual consent mechanisms

- The 30-day to 5-year retention increase affects ALL consumer accounts (Free, Pro, Team) with training enabled by default—only true business accounts maintain automatic protection

- Small businesses face greater exposure than enterprises because “Pro” and “Team” accounts sound professional but receive consumer-grade privacy treatment

- Shadow IT discoveries revealed widespread unauthorized AI usage, with employees accepting new terms independently and potentially exposing corporate data

- Immediate action required: Audit all accounts, disable training in privacy settings, implement data classification policies and negotiate protective provisions with AI vendors

Taking Control of Your AI Contract and Data Privacy Strategy

The modifications described above demonstrate how quickly privacy frameworks can shift and why organizations need proactive strategies rather than reactive responses. Therefore, businesses must treat AI privacy as a strategic priority requiring the same attention as cybersecurity or regulatory compliance.

AMST Legal specializes in navigating these complex AI contractual landscapes. We help organizations understand not just what policies say but what they mean for practical operations. We’ve guided numerous businesses through the maze of AI and SaaS Contracts, identifying risks others miss and negotiating protections that actually matter. Additionally, our expertise spans from emergency audits following policy changes to comprehensive AI governance framework development.

Whether you need immediate assistance assessing your Claude account exposure, strategic guidance negotiating with AI vendors, or ongoing support managing evolving privacy requirements, AMST Legal provides flexible engagement models tailored to your needs. Contact us at amstlegal.com to discuss how we can protect your organization’s interests while enabling responsible AI adoption.

To read more on this topic, see our Ultimate Guide on how ChatGPT, Perplexity and Claude use Your Data.

Here are other articles on this topic: “Anthropic will start training its AI on your chats unless you opt out. Here’s how”, “Anthropic will start training its AI models on chat transcripts” and “Anthropic Will Use Claude Chats for Training Data. Here’s How to Opt Out”.

About AMST Legal

At AMST Legal, we specialize in helping businesses navigate the world of AI and SaaS contracts. We move beyond simple legal reviews to provide strategic advice on data privacy, sales & vendor negotiations and internal governance frameworks. Whether you need an emergency audit following a policy change, help securing enterprise-grade protections from AI vendors, or a fractional General Counsel to oversee your legal operations, we provide the expertise needed to enable responsible AI adoption. Contact us at info@amstlegal.com or book a meeting here to ensure your organization’s data remains a private commercial asset, not a public training set.

Author: Robby Reggers, Founder of AMST Legal (amstlegal.com), recognized by Legal Geek as a LinkedIn Top Voice for contracting, negotiation, and interim GC work. AMST Legal supports clients per contract/project or on an interim basis (set hours/week).

DeepSeek – Is your Data Safe? Everything You Need to Know

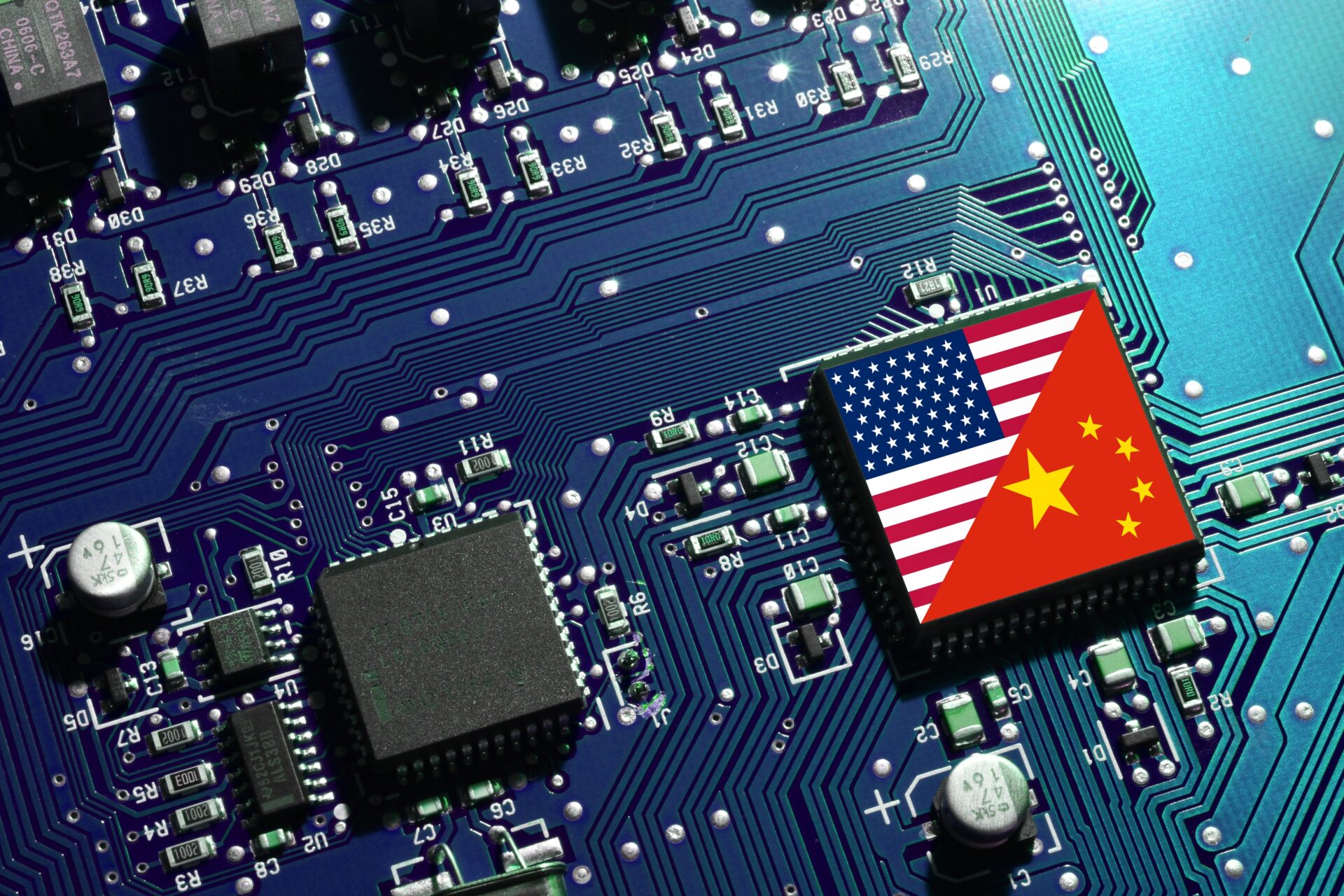

In my previous article we examined how ChatGPT, Perplexity and Claude use your data (AI data use). We also mentioned the potential risks of sharing confidential or proprietary information, and how to avoid these risks. It is clear that not all tools offer the same safeguards regarding data privacy, security, and legal protections. We will therefore continue comparing AI models. This week we focus on the AI model DeepSeek. See below our article ‘DeepSeek – Is your Data Safe? Everything You Need to Know’.

DeepSeek was unknown to most people outside of the People’s Republic of China (“PRC”) until this week. In the course of one week, however, it has gained immensely in popularity. It is yet another AI-driven platform, but there are important differences from the other well-known AI models. DeepSeek is currently challenging AI models like ChatGPT and Gemini with capabilities that were unexpected until very recently. In this article, we will explore DeepSeek’s Privacy Policy & Terms of Use. We will also address issues such as personal data storage, AI model training and jurisdiction. If you’re wondering whether you can safely use DeepSeek for personal or professional tasks, read on to discover the key facts, risks and best practices.

This article was written at the start of the broad use of DeepSeek. It is therefore a work in progress, based on the first information gathered.

What We’ll Cover

- DeepSeek Overview: Short explanation of the platform and why people are interested in it.

- Privacy Policy Highlights: Including details on storing personal data, especially full names and addresses.

- Location of Data: How and why user data may end up on servers in the People’s Republic of China.

- Terms of Use: Whether DeepSeek incorporates your content into training its AI models—and what that means for you.

- Data Privacy in China: The local regulatory environment and how it differs from GDPR or CCPA.

- Confidentiality: Potential risks if you’re handling sensitive or proprietary information.

- Final Advice: How to proceed if you’re considering using DeepSeek, plus some general cautionary steps.

1. DeepSeek Explained

New AI Model

DeepSeek is a cutting-edge large language model (LLM) similar to ChatGPT and Gemini. It is developed by a Chinese AI company with the full name ‘Hangzhou DeepSeek Artificial Intelligence Co., Ltd., and Beijing DeepSeek Artificial Intelligence Co., Ltd.’. It is designed to tackle a range of complex tasks with impressive efficiency according to the latest tests. According to Google Gemini, its powerful AI shines in several key areas:

- Math Whiz: DeepSeek excels at mathematical reasoning and problem-solving, often outperforming other models.

- Logic Master: DeepSeek handles complex, multi-step logical reasoning with ease.

- Code Conjurer: DeepSeek understands and generates code in various programming languages, making it a valuable tool for developers.

- Conversation Starter: DeepSeek is a natural language expert, capable of engaging in coherent and contextually relevant conversations.

- Global Communicator: DeepSeek is trained on diverse linguistic data, offering some level of multilingual support.

Consequences Stock Market

On 27 January 2025 there was even a big shake-up in the stock market due to DeepSeek. US stocks plummeted as traders fled the tech sector and erased more than $1 trillion in market cap amid panic over the introduction of DeepSeek. The S&P 500 was nearly 1.5% lower, while the tech-heavy Nasdaq Composite had shed more than 3% by the end of the day. DeepSeek roiled stock futures after the AI model was said to outperform OpenAI’s ChatGPT in several tests. The losses gathered momentum after DeepSeek became the most downloaded app on Apple’s App Store in the US on 26 January.

Source: Business Insider.

A Growing Global Market

As AI popularity expands worldwide, companies outside the U.S. and Europe (especially China) are developing their own solutions. DeepSeek is notable because it may offer high-speed performance and robust Chinese language capabilities. This makes it attractive to users with specialized language needs. However, these benefits come with a different legal framework, which can pose challenges for those used to Western data protection standards.

It has also been reported that DeepSeek is able to offer similar services and results as ChatGPT, Gemini, etc. for a fraction of the cost. This has greatly fueled the popularity of the new AI model.

2. DeepSeek’s Privacy Policy: Personal Data Collection

According to DeepSeek’s publicly accessible Privacy Policy, the platform collects, among other things, a wide range of personal information. Quote: “When you create an account, input content, contact us directly, or otherwise use the Services, you may provide some or all of the following information:

- Information When You Contact Us. When you contact us, we collect the information you send us, such as proof of identity or age, feedback or inquiries about your use of the Service or information about possible violations of our Terms of Service (our “Terms”) or other policies.

- Profile information. We collect information that you provide when you set up an account, such as your date of birth (where applicable), username, email address and/or telephone number, and password.

- User Input. When you use our Services, we may collect your text or audio input, prompt, uploaded files, feedback, chat history, or other content that you provide to our model and Services.”

Additionally, DeepSeek stores (i) Automatically Collected Information like technical information, usage information, cookies, payment information and (ii) information from other sources like login, signup or linked information.

Where Is Information Stored

DeepSeek explicitly states:

“The personal information we collect from you may be stored on a server located outside of the country where you live. We store the information we collect in secure servers located in the People’s Republic of China.

Where we transfer any personal information out of the country where you live, including for one or more of the purposes as set out in this Policy, we will do so in accordance with the requirements of applicable data protection laws.”

For many users – especially those in countries with stringent privacy regulations – this is significant. Your legal recourse to access, delete or restrict your data might be limited once it’s hosted on servers in the PRC.

How Is This Data Stored and Processed?

While the Privacy Policy mentions “secure servers,” it is not clear how they deal with specific practices such as:

- Encryption: Are your data and prompts encrypted at rest or in transit?

- Third-Party Sharing: How widely is your data shared for analytics or collaboration?

- Data Deletion: What happens if you decide to close your account or remove certain information?

- Data Security: How are the servers protected against threats such as cyberattacks?

If you’re accustomed to GDPR (EU Data Privacy Regulation) or CCPA (California Data Privacy Regulation), you may be disappointed by the lack of clearly defined user rights (like the right to be forgotten or the right to data portability). It remains to be seen how these matters are covered by DeepSeek, especially from a regulatory point of view: data privacy, data security and now also AI (see the EU AI Act).

The DeepSeek Privacy Policy contains many more interesting aspects, but covering all of them would go beyond the scope of this article.

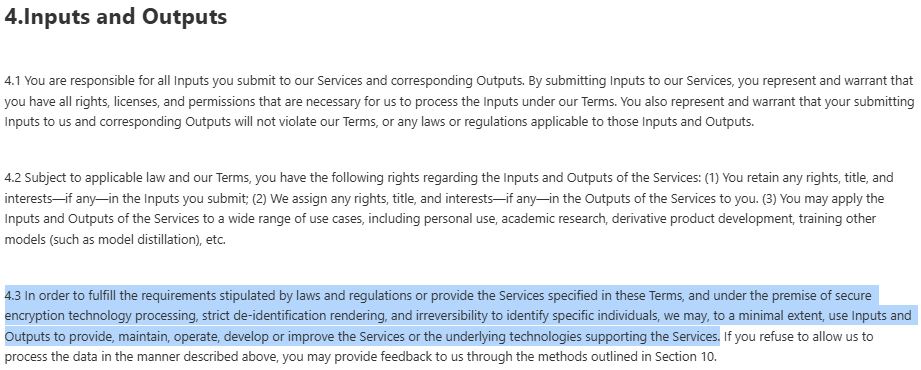

3. DeepSeek’s Terms of Use: Model Training & Governing Law

DeepSeek’s Terms of Use do mention that the AI model will only use your Inputs in specific cases. It is, however, not exactly clear how broadly this should be read. See part below in blue. The terms also offer customers a way to inform DeepSeek that they do not permit DeepSeek to use their Input data.

Potential Risks for Confidential Information

As always, be mindful when adding client documents, proprietary research, or any private data. As far as we are aware at this moment:

- No Guarantee of Confidentiality: There is no explicit promise to keep sensitive data confidential.

- Future AI Outputs: The model might inadvertently reveal or be influenced by your confidential info.

- Irreversible Submission: DeepSeek’s Terms of Use do not mention how long DeepSeek will store your data, which could allow indefinite use of your data.

- Unclear Use of Input: It is not exactly clear how and in which cases your Inputs will be used to create Outputs or to train the model. It is likely that DeepSeek uses your Input to train its AI model, and we should assume that it does.

Governing Law and Jurisdiction

Next, let’s review the terms regarding governing law and jurisdiction, meaning the laws that govern the use of DeepSeek and where you will need to go to court in case of litigation with DeepSeek. DeepSeek clarifies this as follows in the Terms of Use:

9. Governing Law and Jurisdiction

9.1 The establishment, execution, interpretation, and resolution of disputes under these Terms shall be governed by the laws of the People’s Republic of China in the mainland.

9.2 If negotiation fails in resolving disputes, either Party may file a lawsuit with a court having jurisdiction over the location of Hangzhou DeepSeek Artificial Intelligence Co., Ltd.

This means:

- The laws of the People’s Republic of China govern all legal disputes.

- Chinese courts in Hangzhou, PRC, will handle any lawsuits.

- Even though the terms state that DeepSeek will comply with applicable laws, it still needs to be researched whether DeepSeek will comply with GDPR, CCPA or other foreign regulations.

4. Data Privacy in China

Since 2018, the EU and US have launched major initiatives for extensive legislation on the protection of data privacy and data security. Even more regulations in this area will follow in the coming years. See our article ‘Six New EU Regulations – like the AI Act – Explained’.

Regulatory Differences

While China has its Personal Information Protection Law (PIPL), as far as we are aware it doesn’t mirror the scope or depth of frameworks like GDPR or CCPA or the other regulations mentioned above. Please note that we are not lawyers or legal advisors versed in PRC laws.

Implications for International Users

If you reside outside China:

- Limited Recourse: You might find it harder to challenge data privacy & security issues in a Chinese court.

- Compliance Gaps: The data privacy and data security protections you are used to under EU or U.S. law may not apply here.

- Cross-Border Transfers: Even if the Privacy Policy mentions meeting local regulations, these could be PRC regulations that differ significantly from your home country’s standards.

5. Which Data Not to Share in AI Models

As we stated in our article on ChatGPT, Gemini and Perplexity, apply common sense and caution when adding data to any online AI model. This could be different for local models, depending on the security measures in place for the AI model.

Avoid sharing for example:

- Confidential details (client, business or family member names, private letters, contracts & strategies).

- Personally identifiable information (PII) (addresses, phone numbers, medical records).

- Proprietary or business-critical data (unreleased products, prices, financials).

- Sensitive materials (health info, internal memos, or other sensitive business and personal material).

- Data protected by Applicable Law (copyright, illegal data, government data).

If you must work with potentially sensitive text, we recommend considering the following:

- anonymizing the data first,

- using an enterprise-level AI solution where the Terms of Use prohibit using your content for model training (this does not seem to be possible for DeepSeek), or

- using an ‘on-premise’ AI model that anonymizes data.
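To make the first option more concrete, here is a minimal Python sketch of what anonymizing text before pasting it into an AI tool could look like. It is an illustration only, not a complete solution: the function name `anonymize`, the placeholder labels and the two patterns are our own assumptions, and simple pattern matching will miss many kinds of personal or confidential data. Always review the result manually before sharing it.

```python
import re

# Illustrative patterns only (assumptions for this sketch); real-world
# detection of personal data needs far broader coverage than this.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def anonymize(text, known_names=()):
    """Replace emails, phone-like numbers and listed names with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    # Names cannot be detected reliably with patterns, so the caller
    # supplies the client, company or person names to mask.
    for i, name in enumerate(known_names, start=1):
        text = re.sub(re.escape(name), f"[PARTY_{i}]", text, flags=re.IGNORECASE)
    return text


sample = (
    "Contact Jane Doe at jane.doe@example.com or +31 20 123 4567 "
    "about the Acme contract."
)
print(anonymize(sample, known_names=["Jane Doe", "Acme"]))
```

Numbered placeholders such as `[PARTY_1]` keep the text readable for the AI model, and you can map them back to the real names yourself once you receive the output.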

6. Bringing It All Together

DeepSeek represents an interesting expansion of the AI landscape, especially for those who require strong Chinese language capabilities or want to explore AI solutions outside typical Western providers. However, its legal environment, data storage location, and governing jurisdiction all point to a platform that may not uphold the same privacy or confidentiality standards you’d expect under GDPR or CCPA.

This doesn’t mean DeepSeek is without merit. You should approach it with open eyes and informed caution. If you have critical confidentiality needs or work in a heavily regulated sector, it is wise to look elsewhere or secure a specialized enterprise agreement that explicitly addresses data protection concerns. For casual or non-sensitive uses, DeepSeek could be a helpful AI resource. As always, be mindful of what you submit and how it could be stored and potentially accessed under Chinese law.

7. Final Thoughts

AI is transforming the way we work – but it’s also transforming how data can move beyond our control. It is advised to think twice before you add content to any AI (LLM) Tool and actively keep track of how your content is used by any AI tool.

As always, we need to stay informed, be cautious and be proactive. That way, you can use the power of AI without compromising your most sensitive information. Keep an eye out for our upcoming in-depth articles on other AI models. We will also cover AI policies that can guide you and your team toward ethical and secure usage of these exciting new technologies.

Disclaimer: This article is a research project, provides general information about AI data usage and does not constitute legal advice.

If you have any further questions about the above, contact me via lowa@amstlegal.com or set up a meeting directly here.

To read more on this topic, see:

- Ultimate Guide how ChatGPT, Perplexity and Claude use Your Data

- Anthropic’s Claude AI Updates – Impact on Privacy & Confidentiality

- South Korea spy agency says DeepSeek ‘excessively’ collects personal data

- Berlin’s data protection commissioner reports the AI app DeepSeek to Apple and Google in Germany as containing illegal content

- Is DeepSeek safe to use?

Ultimate Guide how ChatGPT, Perplexity and Claude use Your Data

AI Data use is at an all-time high and data privacy & confidentiality is incredibly important when using AI models. Before you add text or documents to AI Models, read below how and when these platforms might use your content. We also list what content to avoid adding to the models and why AI Policies can be incredibly helpful.

Recently, I had a discussion with a lawyer who shared full client documents, including detailed confidential information, with an AI tool. He was completely unaware that the data we feed into AI systems can be used by the model. That conversation made me realize something important: Not everyone knows how large language models (LLMs) like ChatGPT, Claude and Perplexity actually handle the content we provide.

This is why I wrote this article, “Ultimate Guide how ChatGPT, Perplexity and Claude use Your Data”, showing when ChatGPT, Perplexity and Claude use (or do not use) your data.

What we will cover:

- Why businesses, law firms, and other organizations need comprehensive AI policies

- How free and paid versions of ChatGPT differ

- How Claude and Perplexity compare in terms of data handling

- Why you should never share private or proprietary content

The below is an ongoing research project, comparing AI models. Please do not treat the below as legal advice. Always verify current policies to ensure compliance with your (organization’s) requirements – carefully review relevant documentation to understand if and how they might use your input to refine their algorithms.

2. What Are AI Models Learning From? Our Data!

It’s common knowledge that AI models, including ChatGPT, Claude, and Perplexity, are initially trained on large datasets (e.g., internet text, books, articles). However, many people don’t realize that these models can also learn from the additional content we submit. This includes for example when we’re asking them to:

- Analyze or summarize data (e.g., uploading sections of a contract)

- Write or edit an article (e.g., pasting confidential notes)

- Generate ideas or code (e.g., providing business-critical snippets)

Whenever you input text into these systems, it may be used – depending on the model and the plan you’re on – to further refine or train the AI. In this article, we’ll dig deeper into how various well-known models handle (or don’t handle) your content, so you can make more informed decisions when you’re working with sensitive data.

3. ChatGPT: Paid vs. Non-Paid Tiers

ChatGPT is currently the most widely used AI model in the chatbot / generative AI space. When it comes to data privacy and confidentiality, it is important to understand the difference between OpenAI’s paid and free offerings, and to answer the question whether OpenAI uses your data to train its model.

ChatGPT (Free)

- Data Usage: Under the free model, OpenAI may use the content you provide to further train or refine the model. This means if you share potentially sensitive information, it could (in theory) be included in the AI’s training data. See the Terms of Use for OpenAI (Free) on this point; the image below shows the relevant part on whether OpenAI may use your Input.

- Key Takeaway: Be mindful of what you enter into the model. If it’s something you wouldn’t want to become part of the broader AI model or if it’s private, proprietary or personal, do not include it in your prompts. Also remember that it can be against the law to add certain content.

ChatGPT (Paid: Enterprise, API, and Other Business Solutions)

- Greater Privacy: According to OpenAI’s Enterprise Terms of Use, customer content is not used to develop or improve the service. See the Business Terms for OpenAI (Paid) on this point; the image below shows the relevant part on whether OpenAI may use your data.

- Stronger Security: Enterprise clients typically get enhanced security measures, better data handling policies, and the option to opt out of data usage altogether.

- Who Should Consider This: Those handling confidential documents, proprietary business processes, or sensitive client data. Lawyers, for instance, might prefer the enterprise offering if they frequently need to process large volumes of privileged information. However, we should note that it is not certain that this Input & Content is secure. Until further notice, we would still advise everyone, especially lawyers, doctors, government employees and others who have access to sensitive information, not to add such information to AI models.

- Opt-out: If you do not want your data contributing to AI model improvements, go to the following link to opt-out: https://lnkd.in/dVPcMfH8

4. Comparing Other AI Models: Claude and Perplexity

Besides ChatGPT, many other AI models are in use. As we will cover Gemini next time, this article goes into the details on Claude and Perplexity.

Claude (by Anthropic)

- Focus on Safety: Claude is well-known for its emphasis on AI safety and ethical guidelines. However, terms regarding data usage can still allow the model to analyze or store user inputs for system improvement unless otherwise specified. See the Consumer Terms of Service of Claude on this point; the image below shows the relevant part on whether Anthropic may use your data.

- Paid Services: Anthropic offers enterprise solutions as well, which come with a separate set of Commercial Terms containing stricter confidentiality and privacy protections. They have also added the following text in the Commercial Terms of Service: “Anthropic may not train models on Customer Content from paid Services”. Always check the most recent Terms of Service for precise details.

Perplexity

- Research-Oriented: Perplexity is built around providing concise, sourced answers. It has been explained to me as the more “Google”-like way of searching with AI models. It has been said that Perplexity may not store or use data exactly like ChatGPT. However, the Terms of Service seem to indicate that Perplexity will use your Content for training purposes, among other things. See the relevant part of Art. 6.4 (b) here: “Accordingly, by using the Service and uploading Your Content, you grant us a license to access, use, host, cache, store, reproduce, transmit, display, publish, distribute, and modify Your Content to operate, improve, promote and provide the Services, including to reproduce, transmit, display, publish and distribute Output based on your Input.” The image below shows the relevant part on whether Perplexity may use your data.

- Enterprise Terms of Service: See these specific terms here. Enterprise customers do provide a license to Perplexity regarding their content. However, the following important wording is added: “Notwithstanding the foregoing, Perplexity does not and will not use Customer Content to train, retrain or improve Perplexity’s foundation models that generate Output.”

5. What Not to Upload to AI Tools

Whether you’re using ChatGPT, Claude, Perplexity, or any other AI platform, apply common sense and caution. Avoid sharing for example:

- Confidential details (client, business or family member names, private letters, contracts & strategies).

- Personally identifiable information (PII) (addresses, phone numbers, medical records).